Featured in: Pittsburgh Post-Gazette, Pop City Blog, PittsBlog, Tech Burgher Blog, and on AM 1360 WMNY The American Entrepreneur, 2/28/09, “Ron Morris talks with Todd Waits”

Semiotic Technologies’ White House project seems simple on the surface, but there is a lot going on under the hood. I’ll discuss the technology, problems, solutions, and results of building a 2.5D world in Flash.

Semiotic Technologies partnered with Argentine Productions to deliver an educational piece for the White House Historical Association. Argentine Productions was responsible for all of the sound work and writing; Semiotic Technologies built the rest of the experience. The Semiotic Team:

| Name | Role |
| --- | --- |
| Todd Waits | CEO, Producer |
| Evan Miller | Art Director |
| Stephen Calender | Chief Engine Architect |
| Nicole Epps | Lead Programmer |
| Daniel Coes | 3D Artist, Animator |

The production time on the project was about two and a half months. The experience focuses on the events and opinions surrounding the Emancipation Proclamation. As an educational piece, its goal was to make history come alive, reaching and captivating audiences in a way no traditional medium could. Being historically accurate was essential, which put considerable limits on game design. Sometimes gaming attributes like puzzles, challenges, or tough decisions can be barriers to learning the content and being accessible to a large audience. Since this world lacks those kinds of game mechanics, I prefer to refer to it as an ‘experience’ or ‘world’.


Visitors to the Lincoln White House are able to speak to important historical figures and find Easter-egg factoids in the world (top two images). Most of the images in the Easter eggs have never been published before. There are also three menus: the cast of characters at the White House, a character menu where you can change your role in the experience, and a map page with information about the areas of the White House in your visit (middle, second from bottom, and bottom image respectively).

Even with the project being as simple as walking around and talking to people, there was a lot to manage. There were 10 key figures, 5 avatar characters, and a host of other characters that the art team had to put together (this project was more involved for the art team than the programming team). Visitors can change their avatar at any time, and they are encouraged to do so since they get different responses depending on the character they are playing. It was definitely an organizational challenge to manage who says what to whom in which room.

The Emancipation Proclamation was signed on January 1st, 1863, New Year’s Day, a day when traditionally (at least during this time period) everyone was welcome to come and meet the president. We were not going for Assassin’s Creed-quality crowd dynamics, but there was a need to make the White House feel crowded and busy yet still easy to navigate. Then there is the engine that runs the world.

To talk about the engine, I need to start with its inception: an earlier project, Cents City (featured on the right, below), where players navigated an isometric view of a city. All of the angles made a custom collision system necessary.

The collision system coupled with the depth manager is what makes these worlds possible. By design, Semiotic Technologies focused on building an engine that facilitated the construction of immersive worlds (the art team also had fully rigged models ready to go in order to make our two and a half month development timeline possible). The engine is composed of custom UI components, development tools, data structures, a depth management system, custom collision detection, and a system to manage the multiple scenes, rooms, or areas of the world that we termed ‘levels’. The main reason that the programming team had a lighter load than the artists was that the engine was already built and a significant amount of code carried over from previous work.

If you have read my Obstacles for Flash Game Development article, you probably have an idea of what I wanted for my first implementation. I did not get to build a per-pixel collision system, nor does the system I built handle rotation or scaling well. Economically, we did not have enough time to do something that detailed, and ultimately we did not need something that powerful.

What I did construct was a grid-based collision model. Levels could have different grid cell sizes, so while you could max the system out by setting the cell size to one pixel, it usually made the world grind to a halt (just too many grid cell lookups). The cell sizes we used most often were 5 and 10 pixels. Similarly, using a grid imposes a maximum size on the levels; we were not comfortable making our levels larger than 2400 by 1800 pixels (43,200 cells with a 10 pixel by 10 pixel cell size). Another feature that I wanted, but did not have time to implement, was streaming levels. All levels are in memory all of the time, which can be taxing on memory resources. If levels were streamed in as needed, and removed when unused, in theory we could make worlds of infinite size. I point out the engine’s shortcomings, and what seem like my own, but we never intended to build the complete engine all at once; it was to be spread out across several projects.
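To make the cell-size trade-off concrete, here is a minimal sketch of a per-level occupancy grid. It is written in Python rather than ActionScript, and the class and method names (`CollisionGrid`, `index_of`, `is_blocked`) are illustrative assumptions, not the engine's actual API.

```python
import math


class CollisionGrid:
    """Boolean occupancy grid covering a level measured in pixels (hypothetical)."""

    def __init__(self, width_px, height_px, cell_px):
        self.cell_px = cell_px
        self.cols = math.ceil(width_px / cell_px)
        self.rows = math.ceil(height_px / cell_px)
        # One flag per cell, stored row by row; True means "blocked".
        self.cells = [False] * (self.cols * self.rows)

    def cell_count(self):
        return self.cols * self.rows

    def index_of(self, x_px, y_px):
        """Map a pixel position to its cell index, or None if off the level."""
        col = int(x_px // self.cell_px)
        row = int(y_px // self.cell_px)
        if 0 <= col < self.cols and 0 <= row < self.rows:
            return row * self.cols + col
        return None

    def block(self, x_px, y_px):
        i = self.index_of(x_px, y_px)
        if i is not None:
            self.cells[i] = True

    def is_blocked(self, x_px, y_px):
        i = self.index_of(x_px, y_px)
        # Assumption: everything outside the level counts as solid.
        return True if i is None else self.cells[i]
```

For a 2400 by 1800 pixel level, this gives 43,200 cells at a 10 pixel cell size, 172,800 cells at 5 pixels, and 4,320,000 cells at 1 pixel, which shows how quickly the lookup count grows as the cells shrink.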

Perhaps most important to a collision system is how it resolves its collisions. Generally there are two camps: a posteriori, where you let objects overlap and resolve the collision after it happens, and a priori, where you look for collisions before you move objects. I might catch some flak from the game community since I prefer a priori when a posteriori is generally implemented. First, I think that it is easier to resolve collisions as they happen than to untangle collided objects. Second, if I were going to add any kind of physics system to the engine at a later point, overlapping (i.e. mass-sharing) objects could cause some serious problems.
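The a priori idea can be sketched in a few lines: test the destination before committing to the move, so objects never overlap in the first place. This Python sketch is illustrative only. The function name, the `is_blocked` callback, and the choice to test each axis separately (which lets a character slide along a wall) are assumptions, not details from the engine.

```python
def move_a_priori(position, velocity, is_blocked):
    """Return the new position, testing each axis before committing to it."""
    x, y = position
    dx, dy = velocity
    # Test the horizontal step first; keep the old x if the target is blocked.
    if not is_blocked(x + dx, y):
        x += dx
    # Then test the vertical step from the (possibly updated) x.
    if not is_blocked(x, y + dy):
        y += dy
    return (x, y)
```

Because only the destination point is tested, this sketch assumes each step is small relative to the obstacles; a fast mover could otherwise skip over a thin wall.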

Collision systems need to be fast, so I spent a long time thinking about and implementing different strategies for parsing space for collisions. At one point, the system ran an adaptive algorithm, picking the best algorithm for different classes of shapes. In the end, I just went with brute force. Brute force, checking every grid intersection or pixel, is the only algorithm that works for every possible shape; it has constant speed, and it uses the least amount of memory. It was far simpler to have just one algorithm, and every other alternative had a worst-case scenario that was as slow as brute force or slower, or it sacrificed accuracy for speed. I did leave in an optimization for objects found to be rectangular, which is essentially the trivial case where you know that the object’s bounding box and collision area are the same (as long as the object has not been rotated).
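A brute-force check with the rectangular shortcut might look roughly like the Python sketch below. The data layout (sets of cell coordinates) and every name in it are assumptions made for illustration. Unlike a strictly constant-time scan, it also returns as soon as it finds the first hit.

```python
def collides(blocked, left, top, width, height, mask=None):
    """Brute-force test of an object's footprint against blocked grid cells.

    blocked: set of (col, row) cells occupied by the level.
    left, top, width, height: the object's bounding box, in cells.
    mask: optional set of (col, row) offsets inside the box that are solid;
          None means the object is rectangular and fills its whole box.
    """
    for row in range(height):
        for col in range(width):
            # Rectangular shortcut: with no mask, every cell in the box is solid,
            # so the per-cell mask lookup is skipped entirely.
            if mask is not None and (col, row) not in mask:
                continue
            if (left + col, top + row) in blocked:
                return True
    return False
```

The worst case visits every cell of the bounding box once, regardless of the shape inside it, which is what makes the cost easy to predict.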


This collision system, as simple as it is, was remarkably powerful compared to Flash’s axis-aligned bounding boxes. Every object added to a level has a collision sprite assigned to it and is classified as either a static or a dynamic object (top). All static objects are mapped and stored into the level’s grid (black lines in the middle image). Then dynamic objects are checked against the level and each other. Instead of having to arrange and keep track of a bunch of rectangles in angled or curved environments, we could just draw where we wanted the collisions to be and only check against one object (collisions for a circular room in the bottom image).
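As a rough illustration of mapping a drawn collision shape into the grid, the Python sketch below samples a shape at each cell centre and records the blocked cells. The `is_solid` predicate stands in for the hand-drawn collision sprite, and the circular room is a made-up example. None of these names come from the engine.

```python
def stamp_static(is_solid, cols, rows, cell_px):
    """Sample a drawn collision shape at each cell centre; return blocked cells."""
    blocked = set()
    for row in range(rows):
        for col in range(cols):
            cx = (col + 0.5) * cell_px
            cy = (row + 0.5) * cell_px
            if is_solid(cx, cy):
                blocked.add((col, row))
    return blocked


def circular_room(center_x, center_y, radius):
    """Collision 'drawing' for a round room: everything outside the circle is wall."""
    def is_solid(x, y):
        return (x - center_x) ** 2 + (y - center_y) ** 2 > radius ** 2
    return is_solid
```

Calling `stamp_static(circular_room(50, 50, 40), 10, 10, 10)` marks the corner cells as wall and leaves the cells near the centre open, with no rectangles to arrange by hand.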

Objects change depth automatically based on their position in a level. I do not believe I did anything novel here; I think I did the same thing that I have seen in other implementations. The object’s depth priority is calculated from the position of its registration point, and a priority list class keeps all of the objects sorted according to their priority. I used a variation of insertion sort in my priority list class since the depth order stays mostly in order across frames.
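Insertion sort suits this job because its cost approaches linear when the list is already nearly sorted, which is the usual case from one frame to the next. The Python sketch below shows the general idea. The priority formula (y first, x as a tie-breaker) and all names are hypothetical, since the engine's exact calculation is not described here.

```python
def sort_by_depth(objects, priority):
    """Insertion sort, in place; near-linear when the list is almost sorted."""
    for i in range(1, len(objects)):
        current = objects[i]
        key = priority(current)
        j = i - 1
        # Shift higher-priority neighbours right until current's slot is found.
        while j >= 0 and priority(objects[j]) > key:
            objects[j + 1] = objects[j]
            j -= 1
        objects[j + 1] = current
    return objects


def depth_priority(obj, level_width=2400):
    """Hypothetical priority: lower on screen draws later; x breaks ties."""
    return obj["y"] * level_width + obj["x"]
```

Re-running `sort_by_depth` every frame after objects move only shifts the few entries that changed order, instead of rebuilding the whole display order.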


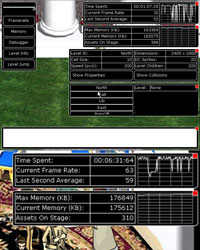

You already saw one of the engine tools when I discussed the collision system. In all, there are five runtime tools: a frame rate meter, a memory meter, a debugger, a teleportation tool to jump to places in the world, and the level information and collision tool. The frame rate and memory meters are hard to see so I zoomed in on them (that and I wanted to show you that my frame rate really was not that erratic; video capturing the game was a bit intensive). Many of you might think that a custom debugger is unnecessary since the Flash editor has a debugger, but I have found great use for mine.

Some things, like streaming and network troubleshooting, need the SWF to be embedded in a webpage. Furthermore, the Flash debugger has a limit on how much text it can hold. I was able to build these tools in about a week and they were well worth the effort (admittedly, I did repurpose a graphing widget that I had previously built). They saved so much time diagnosing problems that they more than paid for themselves.

Even with all the tools and the engine at our disposal, not everything went as planned. I did all of my benchmarking after this project was over. Looking back, some of the character assets should have been bitmaps instead of vector artwork. However, working with vectors was far easier for the characters since they each have 30 frames; 30 images associated with each character would have been painful to deal with in the library.

I am still bothered by the level manager class. The level manager is critical to the engine, but each game or experience tends to have its own transitions between levels. It is frustrating that an engine component needs that much editing, since the whole point of an engine is that it is a highly reusable code base. Part of my irritation stems from working with Flash’s tween objects for transitions, since they are difficult to lay out in a series.

Another inevitably messy code issue was the controls for character animations and movement. There are stationary characters, marching soldiers, characters that move randomly, some that just look around, and some with their own unique animations. Even something as simple as random movement needs controls so that NPCs avoid doorways and tight spots. All of that code is amalgamated into the NPC class. I have no doubt that is where the code belongs; it is just egregiously long when it is combined with the animation playback controls.

I know that the Semiotic team, Argentine Productions, and the White House Historical Association are all happy with the results. Any project that is delivered on time and on budget is a good project. I was thrilled with our artists; they really did make my coding look good. Not only were they able to create high quality assets, but they were so skillful at image compression that the load time is under 40 seconds for most users and we never had to worry about memory usage. Everyone involved was fantastic to work with; I hope that there are opportunities in the future to work with them again. I am biased since I worked on the project, but I do believe stepping into our White House is much more engaging than reading a history book.

Thanks for reading, and remember, we are all in this together.
